The 2015 FRC competition has introduced a new ranking system, the biggest shakeup in the status quo since the 2010 season. The new “Qualification Average” replaces the old system of a “Qualification Score” based on W/L/T in Qualification Matches.

We thought it would be fun to model how this change would have impacted rankings at the District, District Championship, and Championship levels in the 2013 season.

For fans of OPR, we have some good news. With wins, losses, and ties effectively eliminated, the ranking system serves as a rough approximation of OPR. Depending on the size of the event and the number of matches, your QA should correlate strongly with your team’s contribution. Smaller events with more matches will provide the best data, just as they did with the old system.

- 36 team district event, 12 matches = max of 24 unique partners, 66.7% of event teams

- 75 team championship field, 10 matches = max of 20 unique partners, 26.7% of event teams
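The arithmetic behind those two bullets can be sketched in a few lines of Python. This is only an illustration: the function name `max_partner_coverage` is made up here, it assumes three-team alliances (two partners per match), and it divides by the full event size as the bullets do.

```python
def max_partner_coverage(event_teams, matches_per_team, partners_per_match=2):
    """Return (max unique partners, share of event teams) for a single team.

    Assumes a team never repeats a partner, which is the best case.
    """
    # A team cannot partner with more teams than exist besides itself.
    max_partners = min(matches_per_team * partners_per_match, event_teams - 1)
    return max_partners, max_partners / event_teams


if __name__ == "__main__":
    for teams, matches in [(36, 12), (75, 10)]:
        partners, share = max_partner_coverage(teams, matches)
        print(f"{teams} teams, {matches} matches: up to {partners} partners ({share:.1%})")
```

Real schedules repeat partners, so the actual coverage at an event will usually be lower than this upper bound.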

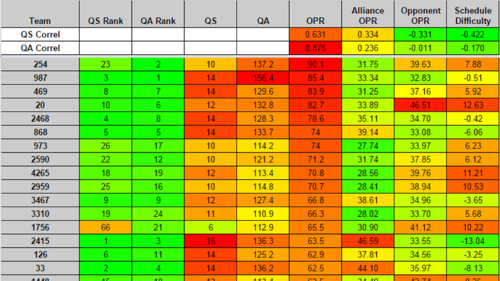

I included the average OPR of each team’s alliance partners and opponents (Alliance OPR and Opponent OPR) along with a rough approximation of schedule difficulty (Opponent OPR minus Alliance OPR). It’s an imperfect way to look at which teams might have had easier (or harder) rides through qualification matches, since it can’t account for “stacked” matches throwing off the average. For example, one match against a trio of powerhouse robots could make your schedule look more difficult than it actually was.
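As a rough sketch of how those three columns could be computed, here is a small Python example. The data layout (a list of `(red, blue)` alliance tuples and an `opr` dictionary), the function name `schedule_difficulty`, and the team labels are all hypothetical, and it assumes the averages are taken over every individual partner and opponent appearance rather than per match.

```python
from statistics import mean


def schedule_difficulty(team, matches, opr):
    """Return (alliance_opr, opponent_opr, difficulty) for one team.

    matches: list of (red_alliance, blue_alliance) tuples of team ids.
    opr:     dict mapping team id -> OPR.
    difficulty = Opponent OPR minus Alliance OPR (positive = harder).
    """
    partner_oprs, opponent_oprs = [], []
    for red, blue in matches:
        if team in red:
            own, other = red, blue
        elif team in blue:
            own, other = blue, red
        else:
            continue  # team did not play in this match
        partner_oprs.extend(opr[t] for t in own if t != team)
        opponent_oprs.extend(opr[t] for t in other)
    alliance_opr = mean(partner_oprs)
    opponent_opr = mean(opponent_oprs)
    return alliance_opr, opponent_opr, opponent_opr - alliance_opr


if __name__ == "__main__":
    # Made-up OPR values and schedule, purely for illustration.
    opr = {"A": 10.0, "B": 20.0, "C": 30.0, "D": 40.0, "E": 50.0, "F": 60.0}
    matches = [
        (("A", "B", "C"), ("D", "E", "F")),
        (("A", "D", "E"), ("B", "C", "F")),
    ]
    print(schedule_difficulty("A", matches, opr))
```

Because everything is averaged, a single stacked match shifts the result in exactly the way described above.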

I’ll look primarily at the top 10-15 ranked teams, though the overall accuracy of the ranking system is now more important in the district system.

2013 Hatboro Horsham: 37 teams, 12 matches per team.

OPR correlates very strongly with the new QA system (0.972) and significantly less so with the QS system that was in place (0.744). QS has a slight negative correlation with Opponent OPR and Schedule Difficulty (the weaker your opponents, and the easier your schedule was, the higher your QS was). This effect is reduced using the QA method (since your opponents no longer matter at all).
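For anyone who wants to reproduce this kind of number from the data file, here is a minimal sketch of a correlation calculation. It assumes the figures are Pearson correlation coefficients, which is not stated explicitly, and the sample values in the usage section are invented rather than taken from the event data.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


if __name__ == "__main__":
    # Invented per-team values, one entry per team, in the same team order.
    team_opr = [45.2, 38.1, 30.5, 22.0, 15.3]
    team_qa = [88.0, 80.5, 71.0, 66.5, 52.0]
    team_qs = [20, 14, 18, 10, 12]
    print("OPR vs QA:", round(pearson(team_opr, team_qa), 3))
    print("OPR vs QS:", round(pearson(team_opr, team_qs), 3))
```

The same function can be pointed at the Opponent OPR or schedule difficulty column to check the weaker relationships mentioned above.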

Looking at changes in the rankings, 816 drops significantly from 2nd to 13th, and 103 jumps from 17th to 2nd. 103 had the 2nd hardest schedule at the event, with the 3rd lowest Alliance OPR. 816 had both the easiest schedule and the lowest Opponent OPR by a large margin. So we’re seeing some bad (and good) luck in scheduling being erased.

2013 MAR Championship: 49 teams, 12 matches per team.

Amazingly, we see almost the same correlation numbers as at Hatboro: OPR has a very strong correlation with the new QA system (0.973) and a weaker one with the old QS system (0.741).

There are a few big changes here: 1279 drops from 5th to 21st, and 1923 jumps from 22nd to 3rd. Oddly, 1279 had a mildly hard schedule (+1.19), and 1923 had the 6th easiest schedule (-2.84). The biggest movement was 4460 going from 8th to 38th. They had the 9th highest Alliance OPR and the 5th lowest Opponent OPR, giving them the easiest schedule at the event.

Overall, the picture is not quite as clean, but we do see some movement. OPRs were very tightly clustered past the top ~5 teams at the event, so it’s probably not worth splitting hairs there.

Archimedes: 100 teams, 9 matches per team.

Correlation with both metrics takes a big drop on the Archimedes field, as 9 matches with 100 teams were simply not enough. OPR correlation with QS was only 0.631, while correlation with QA is a much better 0.875.

There’s a bit too much schedule influence here to make many strong statements, as an extra loss or two has a much bigger impact, but subjectively the new system does a much better job with the top 10-20 teams here. Team 254 serves as a particularly good example of where the new system can yield improvements, as they jumped from 23rd to 2nd.

Content/Analysis Provided By: Scott Meredith (Mentor FRC2590)

Data File: Google Drive beyondinspection: QS vs QA