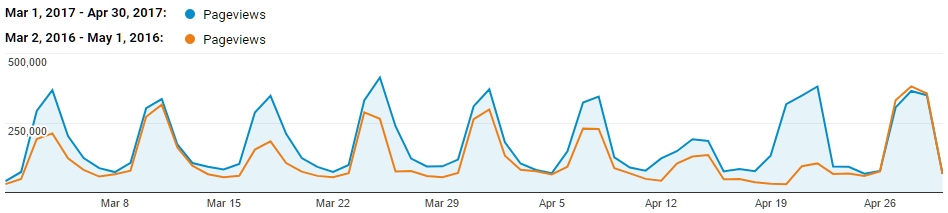

410+ K web page views. 350+ K API requests received*. 110+ K notifications sent. That’s how much load the TBA servers experienced on a typical Saturday during the competition season in 2017. Here’s a look at how The Blue Alliance is able to scale to meet demands while keeping running costs low. In short: TBA uses Google’s scalable web platform and a whole lot of caching.

* The number of API requests is higher than this, but because of caching, our servers see and track only a fraction of the requests made. More on this later.

Google App Engine

The main backend for The Blue Alliance runs on Google App Engine (GAE), a fully managed, highly scalable cloud platform. This gives developers the freedom to spend more time implementing features rather than managing servers and other infrastructure — very beneficial for a community-driven project like TBA.

To start, let’s go over how GAE works at the highest level. Server code runs on an instance, which can be thought of as a small server computer (in reality it’s more like a time-shared virtual machine) that runs at 600 MHz and has 128 MB of memory, much less powerful than a laptop from even 10 years ago. When an HTTP request (page view, API call, etc.) comes in, the instance makes the database queries it needs (e.g. fetching all of the matches, awards, and teams from a particular event), renders the response (e.g. formatting an HTML page or generating API JSON), and then outputs it.

A single instance can serve only a limited number of requests in a given time. For example, if querying the database and rendering a web page takes 0.1 seconds, and we don’t want a user to have to wait more than 1 second for a page to load, receiving more than 10 requests per second would degrade user experience. This is where GAE’s automatic scaling comes in. The same server code is automatically deployed and run on more instances if the traffic demands it and on fewer instances if traffic decreases. In the same example, if there are 100 requests per second, GAE automatically scales to 10 instances, which can handle the load in parallel. Since the TBA database is mostly read-only (most user-facing pages and API endpoints access data, but don’t write data), it doesn’t face many of the concurrency issues that can slow down or deadlock a database (an issue faced by FIRST during event registration). That makes scaling quite straightforward: add more instances that read from the shared database to handle pending requests.
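The arithmetic in that example can be written out as a minimal sketch. The function name and the assumption that each instance handles one request at a time, with evenly spaced arrivals, are ours for illustration; GAE's real scheduler is more involved.

```python
def instances_needed(requests_per_second: int, ms_per_request: int) -> int:
    """Smallest number of instances that keeps up with the incoming rate.

    Assumes each instance handles one request at a time and that
    requests arrive evenly, which real traffic does not.
    """
    # Total milliseconds of work arriving each second.
    work_ms_per_second = requests_per_second * ms_per_request
    # One instance supplies 1000 ms of work per second; round up.
    return max(1, -(-work_ms_per_second // 1000))


print(instances_needed(10, 100))   # 10 req/s at 0.1 s each -> 1
print(instances_needed(100, 100))  # 100 req/s at 0.1 s each -> 10
```

Integer milliseconds are used instead of fractional seconds so that the rounding-up step is not affected by floating-point error.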

However, cost now becomes an issue. Each request incurs some cost in database reads and some cost in instance hours (“CPU time”) for rendering. Ideally, if the same request is made again (by the same user or by different users) and little or no data has changed, database reads and instance hours should be minimized. This is where multiple layers of caching come in.

Caching Part 1: Database Query Cache

The Blue Alliance stores each match, team, award, event, etc. as a separate entity. This enables complex queries such as “fetch all of team 254’s matches in 2017” or “fetch all Chairman’s awards from all Championships.” However, GAE charges for database reads based on the number of entities retrieved. Fetching all matches, teams, and awards for one event easily takes over 100 entity reads, even for small events, which becomes very expensive, very fast. Enter TBA’s Database Query Cache (DQC), which stores the results of common queries in the database as a single entity. Examples of such queries include “all awards in a given year,” “all matches from a given event,” and “all of a given team’s awards from a given year.” Now, if data has not changed, fetching all matches, teams, and awards for one event no longer takes over 100 entity reads. It takes just three.
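To make the cost difference concrete, here is a minimal sketch of the idea using a toy in-memory datastore that counts entity reads. `CountingDatastore`, `event_matches`, the key formats, and the event key `2017demo` are all hypothetical names made up for this illustration; this is not TBA's actual code or the GAE datastore API.

```python
class CountingDatastore:
    """Toy stand-in for a datastore that bills per entity read."""

    def __init__(self):
        self.entities = {}      # key -> value
        self.entity_reads = 0   # what we would be billed for

    def put(self, key, value):
        self.entities[key] = value

    def get(self, key):
        self.entity_reads += 1
        return self.entities.get(key)

    def query_prefix(self, prefix):
        keys = sorted(k for k in self.entities if k.startswith(prefix))
        return [self.get(k) for k in keys]  # one billed read per result


def event_matches(db, event_key):
    """Return all matches for an event, going through the query cache."""
    cache_key = "dqc:matches:" + event_key
    cached = db.get(cache_key)  # a single entity read
    if cached is not None:
        return cached
    matches = db.query_prefix("match:" + event_key + ":")
    db.put(cache_key, matches)  # store the whole result as one entity
    return matches


db = CountingDatastore()
for i in range(100):
    db.put("match:2017demo:%03d" % i, {"number": i})

event_matches(db, "2017demo")
cold = db.entity_reads  # 1 cache miss + 100 match entities
db.entity_reads = 0
event_matches(db, "2017demo")
warm = db.entity_reads  # just the cached result entity
print(cold, warm)  # 101 1
```

The first call pays for every match plus the cache miss; every later call pays for one entity until the cached result is cleared.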

An additional benefit is that the DQC is shared between all parts of the site. If one user warms an event’s matches cache by visiting a web page, another user calling the API for the same event’s matches will use the same cached result!

Cached values have an infinite lifetime and are automatically cleared if any data that makes up the cached result changes. Details about how it works may be a topic for a future Tech Talk.
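Since the details are not covered here, the following is only one possible way to get "infinite lifetime, cleared on change" behavior, not a description of how the DQC does it. The sketch assumes each cached result declares which pieces of data it was built from; `QueryCache`, its methods, and the keys are hypothetical.

```python
class QueryCache:
    """Toy query cache: entries never expire, they are cleared on writes."""

    def __init__(self):
        self._results = {}     # cache key -> cached query result
        self._dependents = {}  # data key -> cache keys built from it

    def get_or_compute(self, cache_key, depends_on, compute):
        if cache_key not in self._results:
            self._results[cache_key] = compute()
            for data_key in depends_on:
                self._dependents.setdefault(data_key, set()).add(cache_key)
        return self._results[cache_key]

    def invalidate(self, data_key):
        """Call after writing anything stored under data_key."""
        for cache_key in self._dependents.pop(data_key, set()):
            self._results.pop(cache_key, None)


matches = {"2017demo": ["qm1"]}
cache = QueryCache()


def fetch():
    return list(matches["2017demo"])


first = cache.get_or_compute("matches:2017demo", ["2017demo"], fetch)
matches["2017demo"].append("qm2")  # a write with no invalidation yet
stale = cache.get_or_compute("matches:2017demo", ["2017demo"], fetch)
cache.invalidate("2017demo")       # clear everything built from this event
fresh = cache.get_or_compute("matches:2017demo", ["2017demo"], fetch)
print(first, stale, fresh)
```

The `stale` read shows why the clearing step matters: without it, a cache with no expiry would return the old result forever.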

Caching Part 2: Rendered Response Cache

While the DQC reduces the costs associated with database reads, the cost for instance hours remains largely unchanged, since the response still needs to be rendered. To cut that cost, fully rendered responses (e.g. HTML pages) are cached using GAE’s Memcache service. Memcache is free to use, but it limits total size, and its values are not persistent and can be evicted at any time.

Rendered responses are complex and draw from many different sources in the database, so it is difficult to track which specific caches need to be cleared after a database update. Instead, cached rendered responses expire after defined periods of time. The cache times are set to be short for teams and events that are currently competing, and long otherwise. This is the primary reason why we say “it’s a caching problem” when pages are out of date.
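A time-based cache like this can be sketched with a small in-memory stand-in for Memcache. Everything here is illustrative: `ExpiringCache` and `cached_page` are hypothetical names, and the two lifetimes are assumed values, since the text only says "short" and "long."

```python
import time


class ExpiringCache:
    """Toy stand-in for a Memcache-style store with per-entry lifetimes."""

    def __init__(self, clock=time.time):
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None or entry[0] <= self._clock():
            self._entries.pop(key, None)
            return None
        return entry[1]

    def set(self, key, value, ttl_seconds):
        self._entries[key] = (self._clock() + ttl_seconds, value)


# Assumed lifetimes for illustration only.
LIVE_TTL_SECONDS = 60
IDLE_TTL_SECONDS = 24 * 60 * 60


def cached_page(cache, key, is_live, render):
    """Return the cached page, rendering only on a miss or after expiry."""
    page = cache.get(key)
    if page is None:
        page = render()
        ttl = LIVE_TTL_SECONDS if is_live else IDLE_TTL_SECONDS
        cache.set(key, page, ttl)
    return page


now = [0]       # fake clock so the example does not need to sleep
renders = []    # one entry per real render


def render():
    renders.append(now[0])
    return "<html>event page</html>"


cache = ExpiringCache(clock=lambda: now[0])
cached_page(cache, "/event/2017demo", True, render)  # miss: renders
cached_page(cache, "/event/2017demo", True, render)  # hit: no render
now[0] = 61
cached_page(cache, "/event/2017demo", True, render)  # expired: renders again
print(len(renders))  # 2
```

The trade-off is visible in the middle call: any database change made during the entry's lifetime is not shown until the entry expires.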

Caching Part 3: Edge Cache & Cache-Control Headers

Now that we have cached both database queries and rendered responses, server costs should be dramatically lower. In the best case, a page that used to need 100+ entity database fetches plus the associated render time is now a single free Memcache fetch and output, using fewer instance hours in the process. Can we do better? What if we could use zero instance hours? How can we serve a page without our server doing anything? The answer is in Edge Caches.

Edge Caches can be anywhere along the network from the GAE data center to the user. TBA utilizes two primary Edge Caches — one built into GAE and another provided by Cloudflare. By setting Cache-Control HTTP headers to identify that a page is public and expires after a certain amount of time, the Edge Caches will save and serve the page for anyone that visits the same page without TBA seeing any server load. Because the Edge Caches are outside of TBA server control, expiration times are set to be only 61 seconds in most cases so incorrect data won’t be shown for extended periods of time.
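As a minimal sketch, the header described above marks a response as cacheable by shared caches for 61 seconds. The helper name is hypothetical; how the header is attached to a response depends on the web framework in use.

```python
def cache_control_headers(max_age_seconds=61):
    """Headers that let shared caches store and reuse a response."""
    return {"Cache-Control": "public, max-age=%d" % max_age_seconds}


print(cache_control_headers())  # {'Cache-Control': 'public, max-age=61'}
```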

Edge Caches are the reason why TBA doesn’t have accurate numbers on API usage; one API endpoint can be called hundreds or even thousands of times in 61 seconds, but if edge caches are functioning at their best, TBA will only see and track one.
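The undercounting can be illustrated with a small simulation. It assumes a single, ideal edge cache in front of the origin and a made-up traffic pattern; real traffic passes through many edge locations, so the origin would see somewhat more than this.

```python
def origin_hits(request_times, max_age_seconds=61):
    """Count requests that reach the origin behind one ideal edge cache.

    request_times: sorted arrival times in seconds for a single URL.
    """
    hits = 0
    fresh_until = None
    for t in request_times:
        if fresh_until is None or t >= fresh_until:
            hits += 1  # edge copy is missing or stale, so ask the origin
            fresh_until = t + max_age_seconds
    return hits


# 1,000 calls spread evenly over one minute, then one more call later.
times = [i * 0.06 for i in range(1000)] + [120.0]
print(len(times), origin_hits(times))  # 1001 2
```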

Putting It All Together

The end result is an architecture that provides better responsiveness to users and is relatively inexpensive to run. The layers of caching reduce page load times that would be over 1 second to under 50 milliseconds in the best case. The entire service costs ~$10 on peak days during competition season and can be much cheaper than $1 on most days in the offseason.
