Here at The Blue Alliance, we love automation. The way we push new code for the site is no exception. But it wasn’t always that way. Even though we have been using continuous integration (an automated way to run tests on the codebase to ensure that proposed changes won’t break everything) for a long time, our deploy process has always had many manual steps. An admin would pull the latest changes from GitHub onto their local machine and run a script that would build all the necessary artifacts and push them to Google App Engine. This approach worked well when a limited number of people were pushing the site. However, it became harder and harder to maintain a similar environment between multiple admins’ local computers. At times, changes to project dependencies wouldn’t get replicated everywhere and someone would unintentionally push a broken build. It soon became clear that the manual approach was no longer working for us.

Travis CI Setup

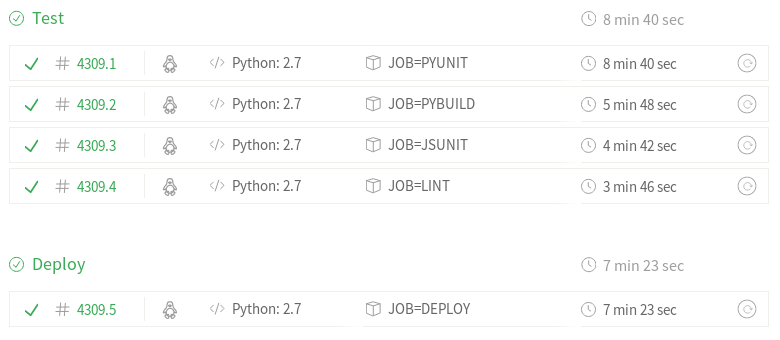

Finally, last May, Travis CI (the service where we run our continuous integration jobs) launched “build stages”, which removed the last obstacle to automatically pushing new code to thebluealliance.com. With build stages available, we could begin building a way to continuously deploy our code without any human input. The whole process takes about 15 minutes from a commit being pushed to GitHub to the deploy being complete.

Travis CI’s build stages allowed us to start a deployment job if and only if the existing test suite passed. This is exactly what we need for continuous deployment – we only want to deploy a commit that has proven itself to be “good” by passing the tests.

The Core Problems

From here, you might think that all we have to do is give the worker script deployment authorization and run “gcloud app deploy” a few times. Unfortunately, it’s not that simple. We want The Blue Alliance to be a reliable website, so there are a few additional things we need to consider:

- We only want to be pushing a single commit at a time; two deploy jobs for different commits shouldn’t “fight for control”

- Travis makes no guarantee on the ordering of jobs. We don’t want to push a commit that is older than what is already deployed

- There are times we want to deploy as fast as possible. If there is a bug in the code and something is broken, we want to get the fix out ASAP.

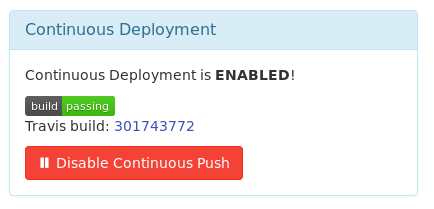

- In case things are really broken, we want to be able to disable automatically pushing commits

- In case things are really, really broken, we need to preserve the ability for an admin to deploy from the command line

Concurrency

The first bullet point is a concurrency problem, one of the most difficult problem spaces in all of computer science. We want to make sure only one commit is ever deploying at a given time (if two commits get pushed in rapid succession, their deploy phases should still execute serially). We can solve this with a mutex, a synchronization primitive that only allows one thing to execute at any given time.

We implement the mutex on top of shared storage, specifically a Google Cloud Storage bucket. GCS objects have strong read-after-write consistency, which is important for implementing a lock. This means that as soon as an object is created, every other process reading that object immediately sees the result (the alternative model is eventual consistency). On top of that, we introduce semantics where we only create the lock file if it doesn’t already exist. If the file create fails, then the file already exists, which means someone else is holding the lock. In that case, we use exponential backoff to wait for the lock to become available and try again. We use an open source implementation of a Google Cloud Storage backed mutex to ensure serialization of deploy jobs.
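The create-if-absent lock with exponential backoff can be sketched as follows. This is a minimal illustration, not the open source implementation we use: `InMemoryStore`, `LockHeld`, and `acquire` are hypothetical names, and the in-memory store stands in for a bucket. With the `google-cloud-storage` client, the same conditional create would likely be expressed by uploading the lock object with the `if_generation_match=0` precondition, which rejects the write when the object already exists.

```python
import random
import time


class LockHeld(Exception):
    """Raised by a store when the lock object already exists."""


class InMemoryStore:
    """Hypothetical stand-in for a bucket: create fails if the name is taken."""

    def __init__(self):
        self._objects = {}

    def create_if_absent(self, name, owner):
        if name in self._objects:
            raise LockHeld(name)
        self._objects[name] = owner

    def delete(self, name):
        self._objects.pop(name, None)


def acquire(store, name, owner, max_attempts=8, base_delay=1.0, sleep=time.sleep):
    """Try to create the lock object, backing off exponentially on failure.

    Returns True once the lock is held, False if every attempt failed.
    """
    for attempt in range(max_attempts):
        try:
            store.create_if_absent(name, owner)
            return True
        except LockHeld:
            # Someone else holds the lock. Wait a random time up to
            # base_delay * 2^attempt so competing jobs do not retry in lockstep.
            sleep(random.uniform(0, base_delay * 2 ** attempt))
    return False
```

A deploy job would call `acquire` before deploying and `store.delete` in a `finally` block afterwards, so a failed deploy does not leave the lock held.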

Commit Ordering

Next, ordering. A to-be-deployed commit should only overwrite an older (not newer) commit. Thankfully, we can use the underlying git repository and the commits’ timestamps to tell whether the currently deployed commit is older or newer than the commit we’re trying to deploy. The APIv3 status endpoint contains information about the commit currently deployed to production. From that, we can extract the deployed commit’s timestamp and compare it to the timestamp of the one we’re trying to deploy.
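As a rough sketch of that comparison, assuming both timestamps are available as Unix seconds: `commit_timestamp` reads a commit’s committer date from the local git repository, and `is_newer` decides whether the deploy should go ahead. Both function names are hypothetical, and how the deployed commit’s timestamp is pulled out of the status response is left out, since that depends on the response format.

```python
import subprocess


def commit_timestamp(sha, repo_dir="."):
    """Return the committer date of `sha` as Unix seconds, using git."""
    result = subprocess.run(
        ["git", "show", "-s", "--format=%ct", sha],
        cwd=repo_dir,
        check=True,
        capture_output=True,
        text=True,
    )
    return int(result.stdout.strip())


def is_newer(candidate_ts, deployed_ts):
    """Deploy only if the candidate is strictly newer than what is live."""
    return candidate_ts > deployed_ts
```

Using a strict comparison means a job for the commit that is already live is skipped rather than redeployed.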

Disaster Recovery

The remaining items on the list all fall into the same category: disaster recovery. This continuous deployment system should fail gracefully when it can and allow for admins to manually intervene in other cases. First off, we can include a special tag in a commit message to bypass all the test jobs and skip directly to the deploy step – this will allow critical bugfixes to be deployed ASAP. Next, if we know that there are commits coming up that we should not deploy, the TBA Admin Panel has a “killswitch” to disable continuous deployment. This data is also exposed over the APIv3 status endpoint. Note that this check fails “closed”: if the TBA website is down, then the APIv3 request will fail, which prevents a commit from being deployed. In those cases, an admin can still deploy the site from a local machine, using the same scripts that Travis runs.
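The fail-closed behavior of the killswitch check might look like the sketch below. Everything here is an assumption made for illustration: `deploy_allowed` is a hypothetical helper, `fetch_status` is any callable that returns the parsed status response, and the `deploy_enabled` field name is invented rather than taken from the real APIv3 schema.

```python
def deploy_allowed(fetch_status):
    """Return True only when the status response explicitly enables deploys.

    Any failure to fetch or read the status (site down, timeout, malformed
    response) is treated as "do not deploy", so the check fails closed.
    """
    try:
        status = fetch_status()
        # "deploy_enabled" is a hypothetical field name.
        return status.get("deploy_enabled") is True
    except Exception:
        return False
```

Requiring an explicit `True` means a missing or unexpected value also blocks the deploy, which matches the intent that only a healthy site with the switch enabled can deploy automatically.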

Conclusion

In conclusion, continuous deployment has been a big improvement for The Blue Alliance. We can release new code faster and more reliably than before and in a way that doesn’t require human intervention. In the process, we were able to prepare for the worst and learn a thing or two about interesting computer science techniques. If this kind of thing interests you, we’re always looking for more people to help contribute to TBA’s tooling – drop me a line and I’ll be happy to help you get started contributing!
