Background

In my most recent post, I investigated what the best generally applicable “Strength of Schedule” metric would be. I settled on something I’m calling “Average Rank Difference”, which is just the sum of all opponent “ranks” minus the sum of all partner “ranks”, plus a few adjustments to normalize between events. What we use for ranks could be almost anything, but it has to be available before the event starts, so you can’t use, for example, each team’s seeding rank at the end of the event you are analyzing. Possible options include team number, winning record from previous events or years, previous district points, previous event ranks, Elo, OPR, etc. For the analysis in this article, I’m going to use Elo since I have it readily available, and I think it’s generally better suited to questions like this than any of the alternatives.
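To make the core of the metric concrete, here is a minimal sketch of the "opponents minus partners" sum for one team. It is not the exact calculation behind the results in this article: the cross-event normalization adjustments are left out, and centering each rating on the event average (so that a perfectly average schedule scores 0) is my own assumption standing in for them. The function name, the match format, and the example ratings are all hypothetical.

```python
from statistics import mean

def schedule_strength(matches, team, rating):
    """Sum of opponent ratings minus sum of partner ratings for one team.

    matches: list of (red_alliance, blue_alliance) tuples of team numbers.
    rating:  dict of team number -> pre-event rating (e.g. Elo).
    Ratings are centered on the event average (an assumption, see above),
    so positive results mean a harder-than-neutral schedule.
    """
    event_avg = mean(rating.values())
    total = 0.0
    for red, blue in matches:
        if team in red:
            partners, opponents = red, blue
        elif team in blue:
            partners, opponents = blue, red
        else:
            continue  # team did not play in this match
        total += sum(rating[t] - event_avg for t in opponents)
        total -= sum(rating[t] - event_avg for t in partners if t != team)
    return total

# Hypothetical one-match "event" with made-up ratings.
example_elo = {1: 1600, 2: 1500, 3: 1400, 4: 1550, 5: 1450, 6: 1500}
example_matches = [((1, 2, 3), (4, 5, 6))]

print(schedule_strength(example_matches, 1, example_elo))
```

In this toy example team 1 faces an exactly average opposing alliance but has below-average partners, so its schedule comes out harder than neutral.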

Introduction

What I want to do now is take this metric and apply it to every schedule of the 3v3 era of FRC and see what we can learn. Normalizing across years and events is difficult, but I’ve tried my best to create a metric that works reasonably well in all cases. There are a few data points I’m filtering out, though. The first is teams and events with an unreasonably low number of matches. The only event in this category is the 2010 Israel Regional, which had only 3-4 matches per team. I’m also throwing out two teams that only had one quals match at an event: team 46 at 2009 Granite State and team 3286 at 2014 Central Washington University. I suspect they didn’t actually compete at these events.

The other set of schedules I’m throwing out is the 2015 schedules. The nature of Recycle Rush meant you cared very little about who your opponents were in quals (and may even have wanted better opponents for the coop points). Strength of schedule was still very important that year, but it means something completely different than in years with W/L games. One last caveat: surrogate data doesn’t exist prior to 2008, so I’m opting to treat surrogates just like normal teams in all years for a consistent comparison. With all of that out of the way, let’s get into the results!

Results

I’ve uploaded a workbook titled Historical_Schedule_Strengths here. It contains two sheets: one that shows individual schedule strengths (higher numbers mean harder schedules and 0 is neutral), and one that shows the standard deviation of schedule strengths at each event (a lower standard deviation means the schedule is more balanced). Play around with it, and let me know if anything looks horribly wrong.
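For anyone who wants to reproduce the second sheet from the first, the per-event number is just the spread of that event's individual schedule strengths. A minimal sketch follows. Whether the population or the sample standard deviation is the right choice is not something I'm specifying here, so the use of the population version is an assumption, and the strengths in the example are made up.

```python
from statistics import pstdev

def event_balance(strengths):
    """Standard deviation of schedule strengths at one event.

    strengths: dict of team -> schedule strength for a single event.
    Uses the population standard deviation (an assumption).
    Lower values mean a more balanced schedule.
    """
    return pstdev(list(strengths.values()))

# Hypothetical three-team event: one easy, one neutral, one hard schedule.
example_strengths = {"A": -30.0, "B": 0.0, "C": 30.0}
print(round(event_balance(example_strengths), 2))
```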

Best/Worst Team Schedules

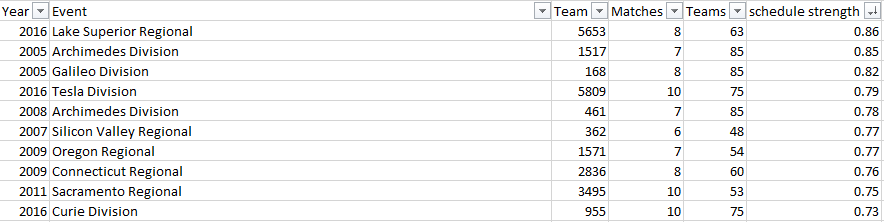

According to this methodology, here are the 10 hardest schedules of the 3v3 era:

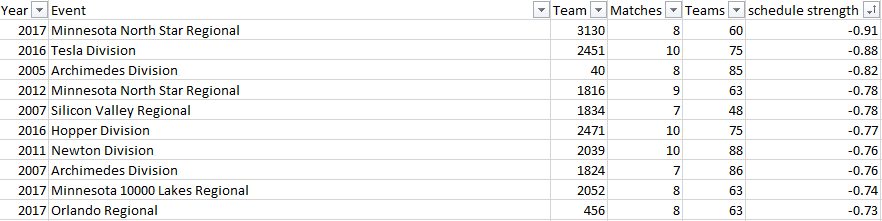

And here are the 10 easiest:

All entries on this list come from events which have either a large number of teams or a low number of matches per team. This makes sense because it is easier to get great/awful schedules in these scenarios, but more on that later. I find it really interesting that both the best and the worst schedules according to this methodology come from MN events within the past few years. I can try to offer some perspective on them since I was at Lake Superior 2016 and know the teams at North Star 2017 pretty well. Here’s a breakdown of 5653’s partners and opponents at Lake Superior 2016:

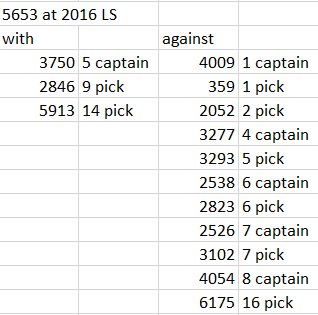

I don’t know if this is actually the worst schedule of all time, but I think it has to be the dumbest schedule I’ve ever personally seen. 4009, 2052, and 359 were a step above everyone at that competition, and 5653 had to face all of them in addition to a huge cohort of other really good teams who made the playoffs. Furthermore, their only partners that ended up making the playoffs were a low captain and a pair of second round picks. It should be noted that you play against 1.5 times as many teams as you partner with, since each match gives you three opponents but only two partners. Even so, 5653 had to play against 10X as many captains/first round picks as they got partnered up with.

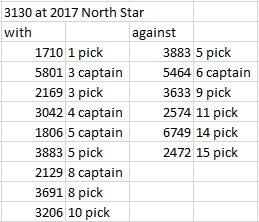

On the other end of the spectrum, we have 3130 in 2017. 3130 was so good in 2017 that I didn’t bat an eye when I saw that they seeded first at this event, but let’s do the same analysis of their schedule:

This one looks amazing for 3130. They didn’t get paired with the 1 captain because they were the 1 captain, but they still got a whole bunch of really great teams to work with. Even among the teams that didn’t make playoffs, there was a noticeable difference in team quality in favor of their partners, as I recognized many of their other partners as being solid teams.

Most/Least Balanced Events

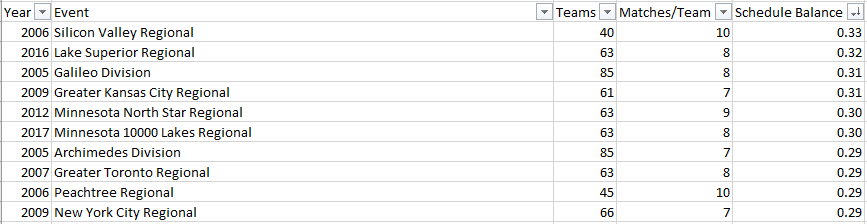

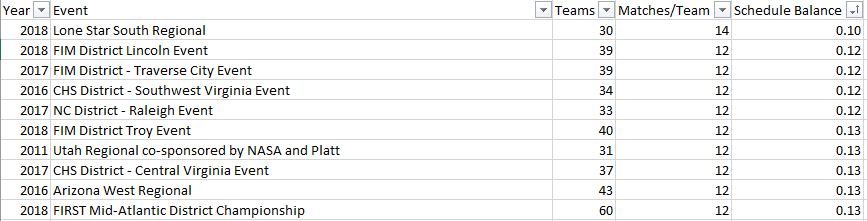

Likewise, here are the 10 least balanced events:

And here are the 10 most balanced events:

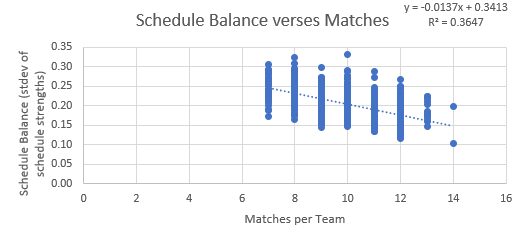

Unsurprisingly, the most balanced events all have a very high number of matches. The least balanced events all have either a low number of matches per team or a high number of teams. Here’s a graph which shows an event’s schedule “balance” versus the number of matches per team:

What this indicates is that going from 7 to 12 matches makes your schedule have about half as much variance on average. An exponential fit for this graph probably would make more sense if we had a bigger range, but a linear fit works just fine since the range is limited. Also, I wasn’t sure if I wanted to use stdev or variance, so I went with stdev even though I personally kind of like variance better.
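If you want to try this kind of fit yourself, here is a minimal sketch of an ordinary least-squares line through (matches per team, event stdev) points, followed by the "how much does going from 7 to 12 matches help" ratio read off the fitted line. The data points below are invented purely to illustrate the mechanics; they are not the values from my graph, and the function name is hypothetical.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Invented illustration data: matches per team vs. event schedule stdev.
matches_per_team = [7, 8, 9, 10, 11, 12]
event_stdev = [10.0, 9.0, 8.0, 7.0, 6.0, 5.0]

slope, intercept = linear_fit(matches_per_team, event_stdev)

# Predicted spread at 12 matches relative to 7 matches on the fitted line.
ratio = (slope * 12 + intercept) / (slope * 7 + intercept)
print(slope, intercept, ratio)
```

A straight line like this eventually predicts a negative spread if you extrapolate far enough, which is why an exponential fit would probably make more sense over a wider range of match counts.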

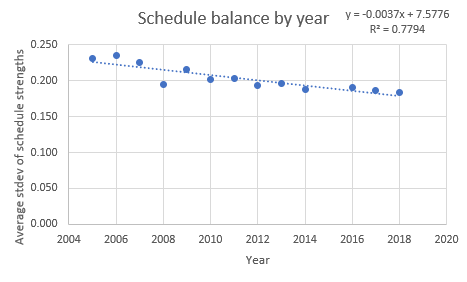

The other way I broke down the data was by year, here is a graph showing the average event schedule stdev for each year.

So the schedules have generally been getting better over time. I’m not sure what all the causes are, but my speculation is that the algorithm was worse pre-2008 (see the 2007 algorithm of death), and that since 2009 the trend has been toward smaller events with more matches per team, which generally increases overall schedule fairness, as seen above. I’m also not sure whether the number of attempted schedules created by the scorekeepers has remained constant since 2008. It’s possible that, with the better computing power available now, more candidate schedules are generated at each event, which means fairer ones get selected.
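The "more candidates means a fairer pick" idea is easy to demonstrate with a toy model. To be very clear, this is not how the real scheduling software works, which as far as I know does not look at team strength at all. The sketch below just generates purely random 3v3 schedules, scores each one by the stdev of its teams' opponents-minus-partners sums (with ratings centered on the event average, which is an assumption), and keeps the lowest. Every name and number here is hypothetical, and the team count is assumed to be a multiple of 6 to keep the toy generator simple.

```python
import random
from statistics import mean, pstdev

def random_schedule(teams, rounds, rng):
    """Each round, shuffle all teams and split them into 3v3 matches."""
    matches = []
    for _ in range(rounds):
        order = teams[:]
        rng.shuffle(order)
        for i in range(0, len(order), 6):
            matches.append((tuple(order[i:i + 3]), tuple(order[i + 3:i + 6])))
    return matches

def schedule_stdev(matches, rating):
    """Spread of per-team (opponents minus partners) sums for one schedule."""
    avg = mean(list(rating.values()))
    strength = {t: 0.0 for t in rating}
    for red, blue in matches:
        red_sum = sum(rating[t] - avg for t in red)
        blue_sum = sum(rating[t] - avg for t in blue)
        for t in red:
            # Opponents are all of blue; partners are red without team t.
            strength[t] += blue_sum - (red_sum - (rating[t] - avg))
        for t in blue:
            strength[t] += red_sum - (blue_sum - (rating[t] - avg))
    return pstdev(list(strength.values()))

def best_of(n_candidates, teams, rounds, rating, seed=0):
    """Generate n_candidates random schedules and keep the most balanced."""
    rng = random.Random(seed)
    candidates = [random_schedule(teams, rounds, rng) for _ in range(n_candidates)]
    return min(candidates, key=lambda m: schedule_stdev(m, rating))

# Hypothetical 12-team event with made-up ratings.
hypothetical_teams = list(range(1, 13))
hypothetical_elo = {t: 1400 + 20 * t for t in hypothetical_teams}

for n in (1, 10, 100):
    best = best_of(n, hypothetical_teams, 8, hypothetical_elo)
    print(n, round(schedule_stdev(best, hypothetical_elo), 1))
```

Because the same seed is reused, the larger candidate pools always contain the smaller ones, so the selected schedule's stdev can only stay the same or go down as the pool grows.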

Conclusions

This was a lot of fun to work on. “Strength of Schedule” is a term that gets tossed around quite a bit, but I think it means different things to different people. I enjoyed taking my best crack at quantifying it and applying it to every year. I also finally got around to fulfilling Travis’ wish 9 months later. Now the next step is to use this metric to create “balanced” schedules of some sort. So stay tuned for that.

May your dreams be filled with graphs,

Caleb
