This is the third part of a three-part blog series on analyzing ZEBRA MotionWorks (formerly Dart) data. Here are links to part 1 and part 2. This final post will focus on advanced insights we can get from applications of Zone Groups.

Background

In my last post, we built a tool called Zone Groups. For a quick refresher, zone groups are sets of field zones that can be used to describe a robot’s location in an intuitive and useful way. A zone group can contain as few as one zone or as many as every zone on the field; we can define them however we want based on our needs. In the last post, we found the percentage of time each team spent in each zone group. This can be insightful for sure, but at the end of the day, those are just crude averages, and we can do far more with zone groups than this. I’ve thought of a dozen different ways to use them, and I’m sure there are dozens more that haven’t even crossed my mind. For this article though, I’m restricting myself to 3 applications: auto routes, defense types, and penalties. We’re going to continue using my ZEBRA Data Parsing tool, so feel free to grab that and follow along.

Auto Routes

One of the first applications of zone groups that I thought of was to determine what paths teams take during the autonomous portion of the match. To define autonomous paths, first create the zone groups that are related to the path. Then, give your path a unique ID and a name describing the path. Finally, add an ordered list of zone groups to define the route. Each robot can only perform 1 auto route per match, so be careful with your definitions so that they don’t overlap. I’ve created 11 different autonomous routes, which I’ll briefly describe here.
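To make the "ordered list of zone groups" idea concrete, here is a minimal Python sketch of how a route could be matched against a robot's auto path. This is not how my parser is implemented; the zone group names (`behind_hab`, `rocket`), the four-route subset, and the "longest matching route wins" rule are all assumptions made for illustration.

```python
from itertools import groupby

# Hypothetical subset of route definitions: ID -> (name, ordered zone groups).
# The zone group names are invented for this sketch.
ROUTES = {
    1: ("Did not cross HAB Line", ["behind_hab"]),
    2: ("One hatch on rocket", ["behind_hab", "rocket"]),
    3: ("One hatch on rocket and retrieve", ["behind_hab", "rocket", "behind_hab"]),
    4: ("Two hatches on rocket", ["behind_hab", "rocket", "behind_hab", "rocket"]),
}


def collapse(samples):
    """Turn per-timestamp zone groups into the sequence of groups visited."""
    return [zone for zone, _ in groupby(samples)]


def is_subsequence(route, path):
    """True if the route's zone groups appear in the path in the same order."""
    remaining = iter(path)
    return all(step in remaining for step in route)


def classify_route(samples, routes=ROUTES):
    """Return the name of the longest route the path satisfies, or None."""
    path = collapse(samples)
    matches = [
        (len(steps), route_id)
        for route_id, (_, steps) in routes.items()
        if is_subsequence(steps, path)
    ]
    if not matches:
        return None
    # Assumption: the most specific (longest) matching route is the one we want.
    _, best_id = max(matches)
    return routes[best_id][0]


auto_samples = ["behind_hab"] * 5 + ["rocket"] * 3 + ["behind_hab"] * 4 + ["rocket"] * 2
print(classify_route(auto_samples))
```

Picking the longest match is one way to keep each robot on exactly one route per match even when shorter routes are prefixes of longer ones.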

The simplest route is a robot that does not cross its own HAB Line. Movement behind the HAB Line doesn’t matter much if you don’t cross it, since there are no scoring opportunities back there. As such, I’m grouping all routes that don’t cross the HAB Line together. The next simplest route is just crossing the HAB Line without moving into any scoring locations. Another easily defined route is for robots that cross into opposition territory. This is indicative of a G3 foul (more on that later) and is one of the worst routes a team can take.

Next, we have the single game piece routes. I’ve created one route each for the rockets and the cargo ship. I could have split these up more by exact scoring location or left/right, but opted not to for simplicity. It’s potentially worth tracking in future games but I decided it wasn’t worth it for this 2019 demo. Building on those two routes, I also have routes for teams that move into a scoring location and then move all the way back behind their HAB Line to collect another game piece.

Finally, we have the double game piece routes. These move first into a cargo/rocket scoring area, then back behind the HAB Line, then up to another cargo/rocket scoring area. Since there are 2 options at each of the 2 scoring stops, these create 4 distinct routes.

Full data on team routes can be found in the shared folder here. I’ll be summarizing a few teams’ routes below:

971

| One Hatch on Rocket | One Game Piece on Cargo Ship | One Hatch on Rocket and retrieve another game piece | Two Game Pieces on Cargo Ship | One Game Piece on Cargo Ship and One Hatch on Rocket |
| --- | --- | --- | --- | --- |
| 15% | 15% | 8% | 54% | 8% |

971 has the highest rate of any team for attempting two game pieces on the cargo ship. Their next most common routes are just getting a single game piece on either the rocket or cargo ship. One time, they returned to get another game piece but presumably ran out of time before returning to score it. Another time, they attempted one game piece each on the rocket and the cargo ship.

1678

| One Hatch on Rocket | One Hatch on Rocket and retrieve another game piece | Two hatches on rocket |
| --- | --- | --- |
| 10% | 20% | 70% |

1678 basically had one auto route and they stuck to it. They had the highest rate of two hatches on the rocket of any team at Chezy Champs (CC). Their next most common auto was placing a single hatch on the rocket and preparing another before time ran out. Only once in the dataset did they attempt just a single hatch on the rocket without returning behind the HAB Line.

1619

| One Hatch on Rocket | One Hatch on Rocket and retrieve another game piece | Two hatches on rocket |
| --- | --- | --- |
| 30% | 50% | 20% |

1619 has a similar set of routes to 1678. It seems their primary auto involved attempting to score two hatches on the rocket. In general though, they seem to lack 1678’s speed. Only twice did they complete this route. Half of the time, they managed to return to retrieve another game piece. A handful of times though, they only seemed to manage a single game piece and did not return for another in auto.

There are lots of different ways to break down auto routes, and I could definitely have defined more of them than I did. I’m not sure exactly what an optimal number of routes is for 2019 (or 2020 for that matter). I don’t want so few as to miss key distinctions in modes, but I also don’t want so many that it is overwhelming or that teams running the same auto can get grouped into multiple routes depending on slight positional variance. I’m feeling like 10 is a good general number to target, but we’ll have to evaluate each season.

Defense Types

Defense is a notoriously difficult thing to measure. Some years it is much clearer than others that defense is being played and who it is being played against. Fortunately, 2019 was one of those years. We can simply define a robot as playing defense if it is on the opposite side of the field from its drivers. This is because all of each alliance’s scoring, loading, and movement locations are on its own side of the field, and because of the G9 restriction of only one opposing robot on your side of the field at a time. If all of your robots are on your own side of the field, then you have no defender. It gets a little bit trickier when we try to define which (if any) robots are being defended. Generally, we think of a robot as being “defended” if it is in close proximity to a defender. How close should they be though? I don’t know the right answer, so I’ve created a couple of different distances to compare.

Due to differing robot dimensions and varying placement of ZEBRA trackers, we can’t know definitively when two robots are contacting one another. However, the trackers will generally be close to centered on robots. We know the max frame perimeter to be 120″, meaning a square robot will have a length/width of 30″, or 2.5 feet. Add on another 6 inches for bumpers and you get 3 feet. The closest two centered tags can be is thus 3 feet, which happens when the robots are squared up with each other. The furthest they can be while the robots still touch is corner to corner, a distance of 3*sqrt(2) feet, or roughly 4′ 3″. With these rough dimensions in mind, and based on my own viewing, I’ve decided to define two robots within 3.5 feet of each other as contacting each other. There will be teams with long robots and non-centered tracker placements that can contact other teams without being within 3.5 feet of their tracker. There will also be small robots that get marked as contacting another team even though there is a noticeable gap between them. Until we get even better incoming data, those are just restrictions we will have to work around.
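The arithmetic above is easy to check in a few lines of Python. This is only a sketch: the helper `in_contact` and its `(x, y)` tuple inputs in feet are my own illustration here, not part of the parser.

```python
import math

FRAME_PERIMETER_IN = 120   # max frame perimeter, in inches
BUMPER_ALLOWANCE_IN = 6    # total extra width added for bumpers, in inches

# Side length of a square, max-size robot including bumpers, in feet.
side_ft = (FRAME_PERIMETER_IN / 4 + BUMPER_ALLOWANCE_IN) / 12

# Centered trackers are closest when robots are squared up,
# and furthest (while still touching) when they meet corner to corner.
min_center_distance_ft = side_ft
max_center_distance_ft = side_ft * math.sqrt(2)

CONTACT_THRESHOLD_FT = 3.5  # chosen between the two bounds above


def in_contact(tracker_a, tracker_b, threshold_ft=CONTACT_THRESHOLD_FT):
    """Treat two robots as contacting if their trackers are within the threshold."""
    return math.dist(tracker_a, tracker_b) < threshold_ft


print(min_center_distance_ft, round(max_center_distance_ft, 2))
```

The 3.5 foot threshold sits between the squared-up and corner-to-corner distances, which is why both false positives and false negatives are possible.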

I’ve also made another defense type for non-contact but “tight” defense. I’m defining this as defense if one robot is a defender and the robots are within 6.5 feet of each other. I’ve also made one more defense type for “loose” defense, which is used if a defender is within 10 feet of an offensive robot.

Finally, I made a defense type called “general” for when a team is on the other side of the field at all. Since there is almost no reason to be on the opposing side of the field except for defense, I wanted to mark a robot as a defender even if there were no robots nearby to them.

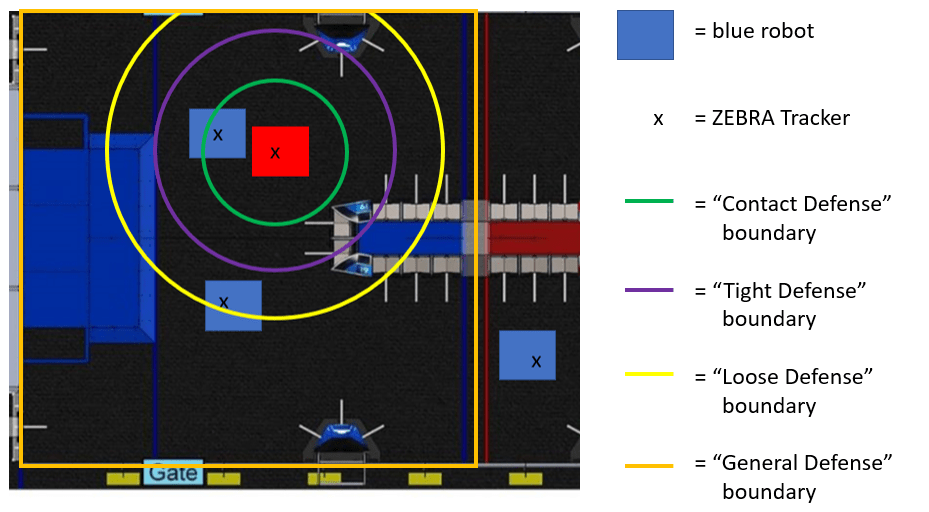

Here is a sketch of the field with a defensive robot’s various defensive areas on it:

As you can see, there are 3 blue robots and 1 red robot in this part of the field. The red robot is on the blue side of the field, so is categorized as a defender. There are 4 different boundaries for the different types of defense. The blue robot directly in front of the defensive bot is within all 4 boundaries, and will thus be considered to be defended all 4 ways. Note that even though the robots are not quite touching, since the blue robot’s tracker is within 3.5 feet of the red robot’s tracker, it is still considered “contact” defense. The other nearby blue robot’s tracker is within the “General Defense” and “Loose Defense” boundaries, so they will be classified as being defended both of those ways at this point in time. The remaining blue robot is on the red side of the field, and does not fall within any of the defensive boundaries, so they are not considered to be defended here.
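The four defense types can be sketched as a simple classification on tracker positions. The coordinate layout below is an assumption for illustration (x in feet along a 54 foot field, with the red half at low x), as is the rule that a robot only counts as "generally" defended while it is on its own half; the distance thresholds are the ones described above.

```python
import math

FIELD_LENGTH_FT = 54.0  # assumption: x runs along the field, red half is x < 27

# Distance limits in feet for each defense type; "general" has no distance limit.
THRESHOLDS_FT = {"contact": 3.5, "tight": 6.5, "loose": 10.0}


def side_of(xy):
    """Return which alliance's half of the field a tracker is on (assumed layout)."""
    return "red" if xy[0] < FIELD_LENGTH_FT / 2 else "blue"


def defense_types(defender_xy, defender_color, target_xy):
    """List the ways one opposing robot is being defended at this instant."""
    # A robot is only a defender while it is on the opposing half.
    if side_of(defender_xy) == defender_color:
        return []
    # The target only counts as defended while on the half the defender has entered.
    if side_of(target_xy) != side_of(defender_xy):
        return []
    distance = math.dist(defender_xy, target_xy)
    types = [name for name, limit in THRESHOLDS_FT.items() if distance < limit]
    return types + ["general"]


# A red defender on the blue half, with three blue robots as in the sketch.
red_defender = (40.0, 10.0)
print(defense_types(red_defender, "red", (42.0, 10.0)))  # close blue robot
print(defense_types(red_defender, "red", (48.0, 10.0)))  # nearby blue robot
print(defense_types(red_defender, "red", (10.0, 10.0)))  # blue robot on red half
```

Counting how many timestamps each type appears in, divided by the total timestamps in the match, would give percentages like the ones in the tables.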

Since you need good data on all 6 robots in order to effectively measure defense, I have thrown out matches in which any robots have poor data quality. This restriction means we will be looking only at matches 1, 5, 10, 12, 17, 24, 29, 30, 31, 33, 35, 40, 54, 58, 59, 64, and 67. This is a reasonably large, but very far from complete, dataset of CC matches. Don’t worry though, for 2020 a much higher percentage of matches will have good data quality, as I have been assured that we will be getting match start timestamps, which means I won’t have to guess at the match start times next season. In the good quality matches listed above, here are each team’s defense percentages:

| Team | Contact Defense | Tight Defense | Loose Defense | General Defense |
| --- | --- | --- | --- | --- |
| 3218 | 19.0% | 43.1% | 60.5% | 70.8% |
| 5507 | 14.7% | 52.8% | 60.7% | 68.1% |
| 498 | 12.6% | 46.0% | 59.5% | 73.7% |
| 1671 | 11.3% | 34.7% | 51.2% | 68.8% |
| 5026 | 8.2% | 24.7% | 30.2% | 31.4% |
| 2102 | 6.0% | 17.3% | 21.2% | 25.9% |
| 2928 | 5.0% | 28.8% | 37.7% | 48.1% |
| 5700 | 3.5% | 15.6% | 21.4% | 35.0% |
| 604 | 3.3% | 5.9% | 8.6% | 14.9% |
| 2046 | 3.1% | 11.3% | 15.8% | 17.5% |
| 114 | 2.7% | 10.8% | 14.7% | 18.5% |
| 1868 | 2.5% | 33.5% | 54.0% | 78.9% |
| 5199 | 2.3% | 7.6% | 9.2% | 10.6% |
| 3476 | 2.0% | 9.0% | 13.0% | 17.8% |
| 1619 | 1.4% | 5.2% | 5.7% | 6.0% |
| 2733 | 0.9% | 4.0% | 5.3% | 6.7% |
| 3647 | 0.7% | 1.9% | 2.8% | 2.8% |
| 2659 | 0.5% | 1.0% | 1.1% | 1.1% |
| 2557 | 0.2% | 2.8% | 3.4% | 3.4% |
| 1710 | 0.0% | 0.0% | 1.7% | 1.8% |
| 1197 | 0.0% | 0.2% | 0.9% | 1.5% |
| 846 | 0.0% | 0.6% | 0.9% | 1.4% |
| 4183 | 0.0% | 0.9% | 0.9% | 0.9% |
| 1072 | 0.0% | 0.0% | 0.0% | 0.8% |
| 3309 | 0.0% | 0.7% | 0.7% | 0.7% |
| 973 | 0.0% | 0.2% | 0.2% | 0.2% |
| 254 | 0.0% | 0.2% | 0.2% | 0.2% |
| 2930 | 0.0% | 0.0% | 0.2% | 0.2% |
| 1983 | 0.0% | 0.0% | 0.0% | 0.1% |
| 649 | 0.0% | 0.0% | 0.0% | 0.0% |
| 971 | 0.0% | 0.0% | 0.0% | 0.0% |
| 5940 | 0.0% | 0.0% | 0.0% | 0.0% |
| 2910 | 0.0% | 0.0% | 0.0% | 0.0% |
| 4414 | 0.0% | 0.0% | 0.0% | 0.0% |
| 1678 | 0.0% | 0.0% | 0.0% | 0.0% |
| 696 | 0.0% | 0.0% | 0.0% | 0.0% |

There were 5 teams with “general defense” percentages above 50%: 3218, 5507, 498, 1671, and 1868. Of those, 3218 played by far the most contact defense, and 1868 played the least. Interestingly, 5507 played more tight defense than 3218 even though they had less contact. It’s tough to draw definite conclusions on such a small dataset, but perhaps 5507 preferred to hang back a little bit more in a “zone” type defense rather than get right up in the offensive team’s face like 3218. 1868’s loose defense percentage was 25 percentage points lower than their general defense percentage, a notably larger gap than for the other teams. This is likely due to their getting knocked out while playing defense with 65 seconds left in the match in q33.

Flipping things around, here are each team’s defended percentages:

| Team | Contact Defended | Tight Defended | Loose Defended | General Defended |
| --- | --- | --- | --- | --- |
| 4414 | 11.7% | 37.8% | 45.8% | 52.9% |
| 1619 | 9.0% | 29.0% | 36.8% | 79.1% |
| 3476 | 8.5% | 21.6% | 29.5% | 53.3% |
| 846 | 7.4% | 27.2% | 41.5% | 60.3% |
| 971 | 6.7% | 12.3% | 18.2% | 54.7% |
| 5507 | 5.1% | 7.5% | 8.2% | 8.6% |
| 5199 | 4.8% | 12.7% | 15.6% | 37.5% |
| 2930 | 4.8% | 16.7% | 19.4% | 22.9% |
| 254 | 4.3% | 18.6% | 26.0% | 37.9% |
| 3309 | 4.1% | 17.9% | 25.1% | 43.4% |
| 1678 | 4.0% | 18.0% | 26.1% | 35.6% |
| 3647 | 3.1% | 17.7% | 22.7% | 52.8% |
| 1868 | 3.0% | 6.2% | 7.3% | 8.5% |
| 2046 | 2.9% | 6.6% | 10.6% | 24.7% |
| 2659 | 2.7% | 6.8% | 10.7% | 41.6% |
| 973 | 2.2% | 6.8% | 12.9% | 33.0% |
| 604 | 2.0% | 4.8% | 7.6% | 33.4% |
| 2928 | 1.7% | 4.9% | 6.5% | 22.1% |
| 2733 | 1.7% | 11.4% | 16.3% | 36.9% |
| 498 | 1.7% | 7.7% | 8.7% | 10.2% |
| 1710 | 1.5% | 21.0% | 36.0% | 85.2% |
| 4183 | 1.3% | 6.3% | 13.1% | 41.1% |
| 2102 | 1.0% | 5.0% | 6.7% | 30.6% |
| 1983 | 0.9% | 11.4% | 20.3% | 40.1% |
| 2557 | 0.8% | 2.7% | 4.4% | 62.4% |
| 2910 | 0.4% | 2.1% | 6.2% | 47.6% |
| 5940 | 0.3% | 1.1% | 4.0% | 43.5% |
| 5026 | 0.2% | 6.7% | 8.7% | 27.4% |
| 3218 | 0.2% | 0.9% | 2.9% | 17.2% |
| 5700 | 0.2% | 2.7% | 3.4% | 3.8% |
| 696 | 0.1% | 1.4% | 3.3% | 42.2% |
| 1671 | 0.0% | 0.8% | 1.6% | 2.4% |
| 114 | 0.0% | 0.2% | 0.4% | 6.0% |
| 1197 | 0.0% | 0.3% | 3.2% | 32.7% |
| 1072 | 0.0% | 0.0% | 0.0% | 0.0% |
| 649 | 0.0% | 1.7% | 14.5% | 35.5% |

4414 is the most heavily defended team in every category except for general defense. We can’t really say much with this small of a dataset, but with larger datasets I would be really curious to see the difference between tight and loose defense for teams. If a team has similar tight and loose defense amounts, that might mean they are not able to put separation between themselves and the defender. A high “loose defense” percentage and low “tight defense” percentage, in contrast, might mean that another team is trying to defend them but can’t keep up and is easily shaken. It’s very speculative at this point, but we’ll learn a lot more as 2020 data comes in.

Penalties

Many penalties each season are based on field zones in which one alliance is restricted in some way. This may be a penalty given anytime a team enters an area, but often there are time-period specific zone restrictions. Additionally, some penalties are only given if there is contact between offensive and defensive robots. Occasionally, we’ll get other restrictions such as no more than 1 robot of the same color in a zone, as was the case in 2019 with G9. I’m grouping all of these together under the “Penalties” umbrella and have created a tab in my data parser to analyze these types of penalties. These are very easy to define: just pull up a manual and answer the questions in the sheet. Here are the penalties I was able to take a crack at measuring with ZEBRA data (contact is defined as a distance of <3.5 feet, as it is for contact defense):

G3: No traveling onto opponent’s side during auto.

G9: Only one robot on opponent’s side of the field at any time.

G13 Foul: No contacting a robot behind its own HAB Line before the endgame.

G13 Automatic L3 Climb: No contacting a robot behind its own HAB Line in the endgame.

G16: No contacting an opponent’s tower in the last 20 seconds if an opposing robot is nearby.

In addition to these definitions, we can define a “cooldown” period, during which a team may not receive the same penalty twice. G3 has an infinite cooldown since you can only receive the penalty once per match. G13 Automatic L3 Climb and G16 have no explicit cooldown in the manual, leading me to believe they can also only be awarded once per match. I will give these an infinite cooldown as well. The G13 Foul is interesting, as it also doesn’t have a specified cooldown in the manual. Since I don’t think an infinite cooldown is reasonable for this one, I have opted to set it at 5 seconds. This is the standard cooldown for penalties that can be awarded multiple times, and referees will likely call penalties at about this rate.
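Here is a minimal Python sketch of how a cooldown could be applied to raw violation timestamps for one team and one penalty. The function name, the use of elapsed match seconds, and the choice to start each cooldown at the last counted violation are assumptions for illustration; only the cooldown values come from the definitions above.

```python
import math

# Cooldowns in seconds as chosen above; math.inf means "once per match".
COOLDOWN_S = {
    "G3": math.inf,
    "G13 Foul": 5.0,
    "G13 Automatic L3 Climb": math.inf,
    "G16": math.inf,
}


def apply_cooldown(violation_times, cooldown_s):
    """Keep a violation only if the last counted one is at least cooldown_s older.

    violation_times: elapsed match seconds at which the rule was being broken.
    """
    counted = []
    for t in sorted(violation_times):
        if not counted or t - counted[-1] >= cooldown_s:
            counted.append(t)
    return counted


# A robot in continuous contact from 10.0 s to 15.2 s, then again at 30.0 s.
raw = [10.0, 10.1, 12.0, 15.0, 15.2, 30.0]
print(apply_cooldown(raw, COOLDOWN_S["G13 Foul"]))  # [10.0, 15.0, 30.0]
print(apply_cooldown(raw, COOLDOWN_S["G3"]))        # [10.0]
```

With an infinite cooldown, only the first violation in a match is ever counted, which matches the once-per-match penalties.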

With all of those definitions, let’s go through all of the penalty violations called by either my parser or the refs (we are again restricted to just the matches we used for defense types):

G3: There is only one instance of G3 that my parser found, which comes from 973 in q29 at 1 second remaining in sandstorm. The refs did not call this penalty.

G9: This would have been by far the most common violation of these 5, with 26 violations in just 17 matches! However, the G9 rule was changed at Chezy Champs to be less restrictive, which means there were far fewer actual calls in those matches than there would have been under the original rules. The only G9 calls by refs that I saw were a possible call in q31 on 3647/3218 at 0:57 remaining, and 2 very probable calls on 2733/1868 in q33 between 0:40 and 0:01 remaining. All of these were also identified by my parser.

G13 Foul: After G9, this was the most common foul by a solid margin. In q5, my parser agrees with the refs in calling a G13 on 1671 with 0:54 remaining. In q29, my parser calls two G13s on 3476, one at 1:45 and one at 1:32 remaining. I think the refs correctly no-called both of these. In q33, my parser calls a G13 on 1868 at 1:07 remaining, also a good non-call by the refs. In q40, my parser calls a G13 on 5026 at 1:43 and the refs do not. This one is incredibly close and could reasonably be called either way based on the video. Finally, in q64, both my parser and the refs call a foul on 604 at 2:05 remaining. The refs call an additional G13 on 604 at 1:16 that my parser does not call; this is another incredibly close one that could probably be called either way.

G13 Automatic L3 Climb: This only happens once in the dataset, by 1619 in q10. My parser and the refs agreed.

G16: My parser calls this twice in total. The first is in q31 by 3218 at 0:20. I think this is a good non-call by the refs. The second is in q33 by 1868 at 0:20. There are a lot of penalties near the end of this match on red, so it is difficult to say whether this specific penalty was called by the refs or not.

For a first pass, I think my parser does a pretty good job of calling fouls. It’s not perfect by any means, but it should be able to get the general idea of penalties. In the future, you could even sync the predicted penalties up with the FMS-reported penalty scores. Then you could keep throwing out the least likely penalty until there are an equal number of predicted penalties and actual penalties.
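The "throw out the least likely penalty" idea could look something like the Python sketch below. Everything here is hypothetical: my parser does not currently produce a `likelihood` score, the field names are invented, and converting FMS penalty points into a count of fouls is left out entirely.

```python
def trim_to_reported(predicted, reported_count):
    """Drop the least likely predictions until the count matches the reported count.

    predicted: list of dicts with hypothetical "likelihood" (higher = more likely)
               and "time_remaining_s" keys.
    reported_count: number of penalties actually awarded (assumed known).
    """
    if reported_count >= len(predicted):
        # Nothing to throw out; the refs called at least as many as we predicted.
        return list(predicted)
    ranked = sorted(predicted, key=lambda p: p["likelihood"], reverse=True)
    kept = ranked[:reported_count]
    # Return the survivors in match order (most time remaining first).
    return sorted(kept, key=lambda p: p["time_remaining_s"], reverse=True)


# Made-up predictions for illustration only; these are not real match data.
predictions = [
    {"team": "A", "time_remaining_s": 105, "likelihood": 0.4},
    {"team": "A", "time_remaining_s": 92, "likelihood": 0.2},
    {"team": "B", "time_remaining_s": 54, "likelihood": 0.9},
]
for penalty in trim_to_reported(predictions, 2):
    print(penalty["team"], penalty["time_remaining_s"])
```

One plausible likelihood score would be how far inside the 3.5 foot contact threshold the two trackers were, but that is a guess that would need checking against video.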

Summary

We’ve covered three advanced applications of zone groups in this post. Auto routes let you classify how a robot moved around the field during the autonomous period of the match. Defense types let you know how teams play defense, and how teams get defended, based on how close offensive and defensive robots are to each other and where on the field they are interacting. Finally, penalties let you know when and where teams are likely receiving positional and contact-based fouls.

And with that, we are at the end of this blog series! Thanks for tuning in. These metrics and the ones in the previous posts are really only scratching the surface of what is possible with these data, so we’re not by any means done. We’ve definitely taken some big steps though, and laid a solid foundation for future analysis. My hope is that with this series, I’ve gotten all of you readers’ minds racing with possibilities. For 2020, one use that immediately comes to my mind is tracking robots moving through the trench, as doing this indicates whether your robot is short or tall. I’m sure you all have other ideas though, so let me know what you’d like to see in the 2020 parser and I’ll do my best to get them added in before competitions start!

Good luck this season,

Caleb